Every live stream you watch — a Sunday sermon on Facebook, a cricket match on a branded app, a product launch on LinkedIn — depends on a set of invisible rules running behind the scenes. These rules are video streaming protocols: standardized methods for transmitting video data over the internet. They determine how video and audio data gets packaged, sent across networks, and reassembled on a viewer’s screen. Without the right protocol, streams buffer, freeze, or fail entirely. Latency spikes ruin interactive moments. Poor video quality drives viewers away. And broadcasting to multiple platforms at once becomes unreliable.

The right protocol choice is the difference between a professional broadcast and a broken one. But most broadcasters never think about protocols — they use whatever default their encoder provides. That’s a problem, because different protocols solve different problems.

This guide will break down every major video streaming protocol used in live broadcasting today, compare their strengths and trade-offs, and help you choose the right protocol for your specific streaming setup.

What Are Video Streaming Protocols?

Video streaming protocols are standardized sets of rules that define how live and on-demand video data is packaged into small chunks, transmitted across networks, and delivered to end viewers. They handle the transport stage of a broadcast workflow, and most of them run on top of TCP, UDP, or HTTP. Every time you go live on YouTube Live or stream a worship service to your church website, protocols govern every packet of data moving between your encoder, your streaming server, and your audience’s devices.

Protocols are not the same thing as codecs. A codec — like H.264 or H.265 — handles compression. It determines how video and audio data is shrunk down to a manageable size. A protocol handles transport. It determines how that compressed data travels from point A to point B. Codecs ride inside protocols, the way cargo rides inside a truck.

One critical distinction separates all streaming protocols into two categories:

- Ingest protocols (also called contribution protocols) — used to send video from an encoder or camera to a server. Examples: RTMP, SRT, WHIP, RTSP.

- Delivery protocols (also called distribution protocols) — used to send video from a server to viewers. Examples: HLS, MPEG-DASH, WebRTC.

Understanding this split is the foundation for every protocol decision you’ll make. A second distinction cuts across it: adaptive HTTP-based protocols favor high-quality, scalable playback, while low-latency protocols favor interactive live streaming.

Ingest vs. Delivery Protocols: Understanding the Difference

Every live video streaming workflow has two legs. The first leg — sometimes called the “first mile” — moves video from your source (camera, encoder, or browser) to a media server or cloud platform. The second leg — the “last mile” — moves video from that server to your viewers’ devices.

| Protocol | Type | Primary Use | Latency Profile | Underlying Transport |
|---|---|---|---|---|
| RTMP | Ingest | Encoder-to-server contribution | Low (1–3 seconds) | TCP |
| SRT | Ingest (+ some delivery) | Remote production, unreliable networks | Very low (sub-second to 2s) | UDP |
| WHIP | Ingest | Browser-based ultra-low-latency ingest | Ultra-low (200–500ms) | HTTP/WebRTC |
| RTSP | Ingest/Control | IP cameras, video surveillance | Low | TCP/UDP |
| HLS | Delivery | Scalable viewer delivery, OTT, VOD | High (15–30s) / Medium (2–5s with LL-HLS) | HTTP |
| MPEG-DASH | Delivery | Adaptive delivery, smart TVs | High (15–30s) / Medium (2–5s) | HTTP |
| WebRTC | Delivery/Interactive | Video chat, video conferencing, real-time communication | Ultra-low (200–500ms) | UDP (peer-to-peer) |

Some protocols can serve both roles. SRT works for both contribution and distribution in certain configurations. WebRTC (Web Real-Time Communication) handles both ingest and delivery but is primarily used for interactive streaming and peer-to-peer scenarios. These overlaps matter, and most guides to streaming protocols fail to explain them clearly.

RTMP (Real-Time Messaging Protocol)

Real-Time Messaging Protocol (RTMP) was originally developed by Macromedia (later acquired by Adobe) to transfer audio and video between a streaming server and the Adobe Flash Player. It operates over the Transmission Control Protocol (TCP) on port 1935. Flash is gone: Adobe ended support for it at the end of 2020. But RTMP didn’t die with it. Instead, its role shifted from being both an ingest and a delivery protocol to being primarily an ingest protocol. Today, it remains the default way encoders send live video to streaming platforms.

RTMP works by maintaining a persistent connection between your encoder and the server. It breaks video and audio data into small packets and transmits them with minimal overhead. This persistent connection keeps latency low — typically 1 to 3 seconds on the ingest side. RTMPS, the secure variant, wraps the same connection in TLS encryption for secure streaming during transport.

RTMP does have limitations. Because it runs on TCP, it’s vulnerable to congestion on unreliable networks. TCP requires every packet to arrive in order — if one packet is lost, everything behind it waits. RTMP also lacks native adaptive bitrate streaming. It’s limited to H.264 video and AAC audio codecs. And it’s no longer suitable as a delivery protocol since web browsers dropped Flash support.

Strengths:

- Universal platform support — YouTube, Facebook, Twitch, LinkedIn, Twitter, and custom RTMP destinations all accept it

- Every major encoder supports it: OBS, vMix, Wirecast, Larix

- Low-latency ingest (1–3 seconds)

- Simple setup — just a server URL and stream key

Limitations:

- TCP-based — struggles on congested or unreliable networks

- No native encryption (requires RTMPS for TLS)

- Limited to H.264/AAC codecs

- Not viable for delivery to modern web browsers

Despite the rise of modern streaming protocols, RTMP remains the most popular streaming protocol for ingest. Its persistent, low-latency connection between encoder and server suits live streaming scenarios where real-time contribution is critical. For multistreaming to 30+ platforms (YouTube, Facebook, Twitch, LinkedIn, and dozens of custom RTMP destinations), RTMP is the common denominator that every platform accepts.

SRT (Secure Reliable Transport)

The Secure Reliable Transport (SRT) protocol was developed by Haivision and open-sourced in 2017 through the SRT Alliance. It is a UDP-based video transport protocol designed to deliver high-quality, low-latency video across unpredictable networks, including the public internet. Where RTMP struggles on congested connections, SRT was built specifically to handle those conditions.

SRT runs on the user datagram protocol (UDP) instead of TCP. UDP doesn’t wait for lost packets the way TCP does. Instead, SRT adds its own error correction layer called ARQ — Automatic Repeat reQuest. Here’s the simple version: instead of resending everything when a packet goes missing, SRT only resends the specific packets that were lost. This keeps latency low while maintaining reliable transport of video data.
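The selective-retransmission idea can be sketched as a toy simulation. This is not SRT’s wire format or API: the function names and the in-memory "network" are hypothetical, and real SRT also discards packets that cannot be recovered within the configured latency window.

```python
def find_missing(received_seqs, highest_seq):
    """Return the sequence numbers the receiver should ask for again."""
    seen = set(received_seqs)
    return [seq for seq in range(highest_seq + 1) if seq not in seen]


def deliver_with_arq(packets, lost_on_first_try):
    """Simulate one round of selective retransmission.

    packets: list of payloads, indexed by sequence number.
    lost_on_first_try: set of sequence numbers dropped by the network.
    Returns (reassembled payloads, number of packets retransmitted).
    """
    # First pass: everything except the lost packets arrives.
    buffer = {seq: data for seq, data in enumerate(packets)
              if seq not in lost_on_first_try}

    # The receiver reports only the gaps it detected.
    missing = find_missing(buffer.keys(), len(packets) - 1)

    # The sender resends just those packets, not the whole window.
    for seq in missing:
        buffer[seq] = packets[seq]

    ordered = [buffer[seq] for seq in range(len(packets))]
    return ordered, len(missing)


frames = ["f0", "f1", "f2", "f3", "f4", "f5"]
restored, resent = deliver_with_arq(frames, lost_on_first_try={2, 4})
print(restored == frames, resent)
```

Two of six packets were lost, so only two are sent again; the other four are never retransmitted.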

SRT also includes built-in encryption using AES-128 or AES-256 to protect video streams from unauthorized access and tampering. Encryption is native to the protocol, so no extra tooling is needed, but it is switched on by setting a shared passphrase on both the sender and the receiver. SRT is also codec-agnostic, so it can carry H.265 (HEVC) as well as H.264, giving it a compression advantage over RTMP.

Key technical specs:

- Transport: UDP

- Encryption: AES-128 / AES-256 (native)

- Error correction: ARQ (Automatic Repeat reQuest)

- Codec support: H.264, H.265/HEVC

- Latency: Adjustable — typically sub-second to 2 seconds

This combination of error correction and encryption is why SRT is gaining popularity in the broadcasting industry. It’s ideal for remote production, cross-country contribution feeds, and streaming IP cameras directly to the cloud over WAN connections. OBS, vMix, and Larix Broadcaster all support SRT output.

According to the SRT Alliance, the open-source consortium now includes over 600 member organizations — a signal of how quickly the broadcasting industry is adopting this protocol for professional workflows.

HLS (HTTP Live Streaming)

HTTP Live Streaming (HLS) is the most widely used video streaming protocol for delivery. Developed by Apple in 2009, HLS is compatible with nearly every device (iOS, Android, smart TVs, web browsers, set-top boxes), which gives it broad audience reach.

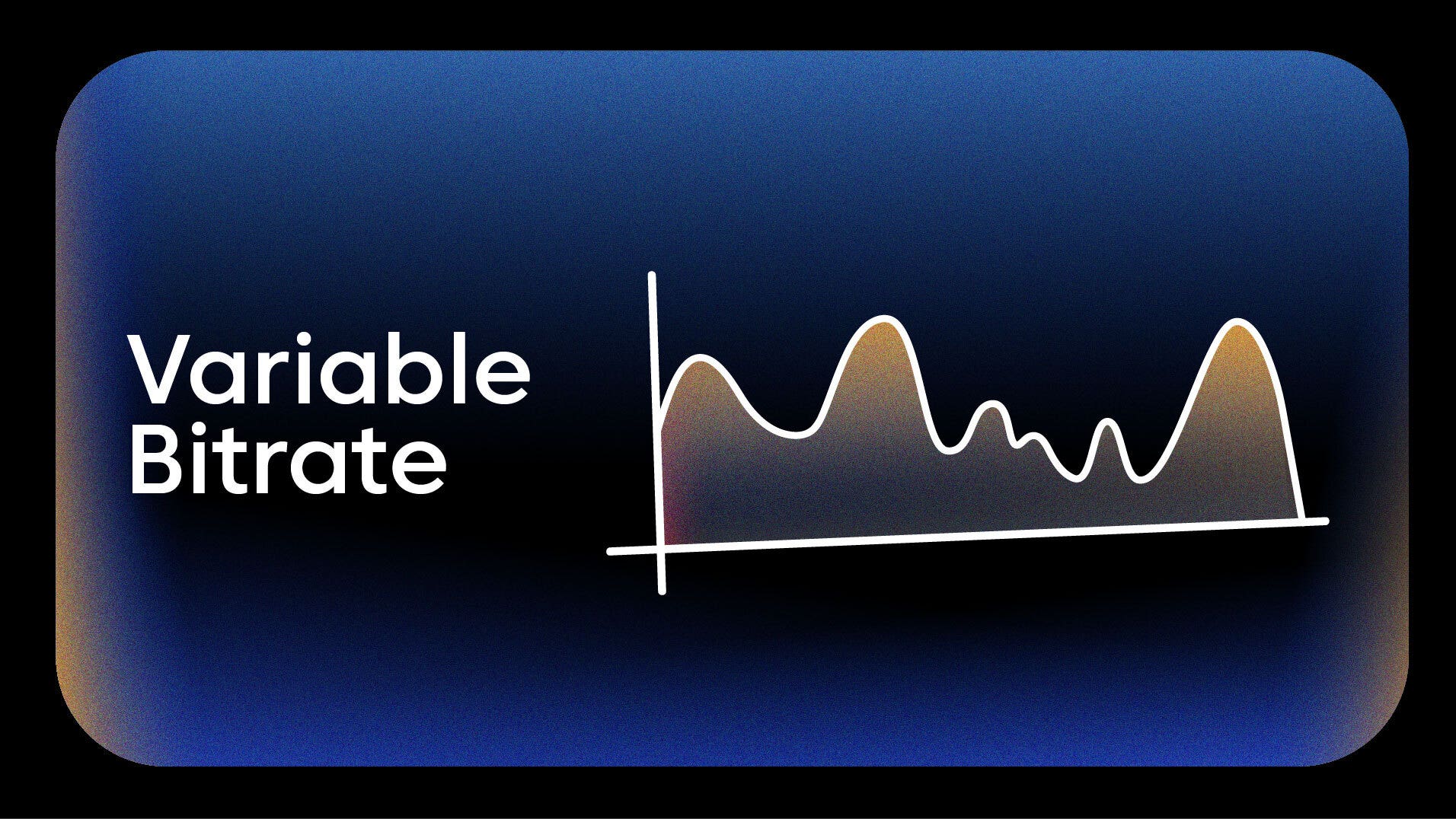

HLS works by breaking a video stream into small segments, typically 6 to 10 seconds each. A transcoder creates multiple quality renditions of each segment — say, 1080p, 720p, 480p, and 360p. A manifest file (called an M3U8 playlist) tells the video player which video segments are available and at what quality levels. The player then downloads segments one at a time, dynamically switching between quality levels based on the viewer’s internet connection speed.
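To make the manifest concrete, here is a minimal sketch that writes a master playlist for a rendition ladder like the one described above. The `#EXTM3U` and `#EXT-X-STREAM-INF` tags come from the HLS specification; the bitrates and file names are illustrative assumptions, not recommended values.

```python
def build_master_playlist(renditions):
    """Build an HLS master playlist.

    renditions: iterable of (bandwidth_bps, width, height, uri) tuples.
    """
    lines = ["#EXTM3U"]
    for bandwidth, width, height, uri in renditions:
        # Each variant is described by one tag line followed by its URI.
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={width}x{height}"
        )
        lines.append(uri)
    return "\n".join(lines) + "\n"


# Hypothetical ladder: bitrates and playlist names are placeholders.
ladder = [
    (5_000_000, 1920, 1080, "1080p.m3u8"),
    (2_800_000, 1280, 720, "720p.m3u8"),
    (1_400_000, 854, 480, "480p.m3u8"),
    (800_000, 640, 360, "360p.m3u8"),
]

playlist = build_master_playlist(ladder)
print(playlist)
```

Each URI in the master playlist points to a per-rendition media playlist, which in turn lists that rendition’s segments.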

How HLS delivery works (step by step):

- Your encoder sends live video to a server via an ingest protocol (RTMP or SRT).

- The server transcodes the stream into multiple quality renditions (adaptive bitrate streaming).

- A segmenter breaks each rendition into short .ts video segments.

- The M3U8 manifest file lists all available segments and quality levels.

- The viewer’s player reads the manifest, monitors bandwidth, and switches quality automatically for buffer-free playback.
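The last step, quality switching, can be sketched as a simple selection rule. Real players use more elaborate heuristics (buffer level, throughput history), so treat this as an illustration only; the ladder values and the 0.8 safety factor are assumptions.

```python
# Hypothetical rendition ladder; bitrates are placeholders in bits per second.
LADDER = [
    {"name": "1080p", "bandwidth": 5_000_000},
    {"name": "720p", "bandwidth": 2_800_000},
    {"name": "480p", "bandwidth": 1_400_000},
    {"name": "360p", "bandwidth": 800_000},
]


def pick_rendition(renditions, measured_bps, safety_factor=0.8):
    """Pick the highest-bitrate rendition that fits the measured throughput.

    A safety factor leaves headroom so small dips do not cause a stall.
    Falls back to the lowest rendition when nothing fits.
    """
    budget = measured_bps * safety_factor
    affordable = [r for r in renditions if r["bandwidth"] <= budget]
    if affordable:
        return max(affordable, key=lambda r: r["bandwidth"])
    return min(renditions, key=lambda r: r["bandwidth"])


for throughput in (10_000_000, 4_000_000, 500_000):
    print(throughput, pick_rendition(LADDER, throughput)["name"])
```

A player would re-run a rule like this after each segment download, which is how the stream steps down on a weak connection and back up when bandwidth recovers.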

This process is what makes HLS the industry standard for large-scale delivery. It works over standard HTTP/HTTPS, which means it passes through firewalls without issues and runs on existing CDN infrastructure. Scalability is a core strength: HTTP-based protocols are the most effective at handling thousands or millions of concurrent users. HLS powers the majority of OTT and video-on-demand delivery worldwide.

The main limitation of HLS is latency. Standard HLS introduces 15 to 30 seconds of delay because of segment buffering. That’s fine for delivering video to large audiences watching a concert or a sermon. It’s not fine for interactive streaming like live auctions or real-time Q&A.
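A rough back-of-the-envelope model shows where that delay comes from. It assumes the player holds about three segments before it starts playing, which is a common default rather than a fixed rule, and it treats encoding and CDN time as a separate overhead you supply yourself.

```python
def estimate_hls_latency(segment_seconds, buffered_segments=3, overhead_seconds=0.0):
    """Estimate glass-to-glass delay for segmented HTTP delivery.

    segment_seconds: duration of each media segment.
    buffered_segments: segments the player holds before playback (assumed).
    overhead_seconds: encode, package, and CDN time (assumed, default 0).
    """
    return segment_seconds * buffered_segments + overhead_seconds


for duration in (6, 10):
    print(duration, estimate_hls_latency(duration))
```

With 6-second segments the floor is 18 seconds, and with 10-second segments it is 30 seconds, which lines up with the 15 to 30 second range above. Shorter segments or partial segments are the main lever for reducing it.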

Low-Latency HLS (LL-HLS) addresses this gap. Apple’s updated specification reduces delivery latency to 2 to 5 seconds by using partial segments and blocking playlist reloads. CDN support for LL-HLS is growing, making sub-5-second delivery increasingly practical at scale.

HLS also works as an ingest method in some configurations. HLS PULL ingest allows platforms to pull an existing HLS feed and redistribute it — useful for restreaming content that’s already being delivered via HLS.

Strengths:

- Near-universal device and browser support

- Native adaptive bitrate streaming — adjusts video quality in real time based on internet speed

- Runs over HTTP/HTTPS — CDN-friendly, firewall-friendly

- Massive scalability for large audiences

- Supports H.264, H.265, and emerging AV1 codecs

Limitations:

- High latency with standard HLS (15–30 seconds)

- LL-HLS reduces latency but requires specific CDN support

- Not designed as an ingest protocol (though HLS PULL exists)

Adaptive streaming is best for on-demand content and public-facing live events where high quality is prioritized over real-time interaction. For adaptive bitrate delivery across all devices, HLS remains the protocol that powers the modern streaming experience.

WHIP (WebRTC-HTTP Ingestion Protocol)

WHIP is the newest major protocol in the live streaming landscape — and it solves a problem that has frustrated developers for years. Before WHIP, every platform that accepted WebRTC-based ingest used its own proprietary protocol for signaling. There was no standard way to send a WebRTC stream to a media server. WHIP changes that.

WHIP (WebRTC-HTTP Ingestion Protocol) is an IETF-standardized protocol that provides a simple, HTTP-based signaling mechanism for ingesting live video into a media server using WebRTC. It works through a single HTTP POST request to establish a WebRTC session. That’s it. Any WebRTC-capable browser or application can send live video to any WHIP-compatible server without custom integration code.
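As a sketch of that handshake, the snippet below builds (but does not send) the HTTP POST that carries the SDP offer. The endpoint URL, token, and placeholder offer are hypothetical; a real offer is generated by a WebRTC stack such as a browser’s `RTCPeerConnection`.

```python
import urllib.request


def build_whip_request(endpoint_url, sdp_offer, bearer_token=None):
    """Build the HTTP POST that opens a WHIP session.

    The body is the SDP offer, sent as application/sdp. A compliant
    server is expected to answer 201 Created with an SDP answer in the
    body and a Location header identifying the session resource.
    """
    headers = {"Content-Type": "application/sdp"}
    if bearer_token:
        headers["Authorization"] = f"Bearer {bearer_token}"
    return urllib.request.Request(
        endpoint_url,
        data=sdp_offer.encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Placeholder values for illustration only.
PLACEHOLDER_OFFER = "v=0\r\n"
request = build_whip_request(
    "https://whip.example.com/ingest/demo",
    PLACEHOLDER_OFFER,
    bearer_token="demo-token",
)
print(request.get_method(), request.full_url)
```

Ending the broadcast is equally simple in the specification: the client sends an HTTP DELETE to the session URL returned in the Location header.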

The result is ultra-low latency communication — typically 200 to 500 milliseconds from source to server. WHIP supports modern codecs including VP8, VP9, H.264, and the emerging AV1. Because it’s browser-native, WHIP eliminates the need to install encoder software for basic live production.

OBS Studio added WHIP support in version 30. Cloudflare Stream supports it. And professional live streaming platforms — including those offering cloud-based live switching from the browser — are adopting WHIP as a primary ingest option. WHIP is particularly relevant for remote guest contributions, quick-setup broadcasts, and cloud-based production workflows where installing dedicated software isn’t practical.

The IETF specification for WHIP, developed in the WISH working group as draft-ietf-wish-whip and since published as RFC 9725, standardizes what was previously a fragmented ecosystem of proprietary WebRTC signaling implementations.

Other Protocols Worth Knowing: RTSP, WebRTC, MPEG-DASH & CMAF

Beyond the four primary protocols above, several other protocols play important roles in specific parts of the streaming workflow.

RTSP (Real-Time Streaming Protocol)

The Real-Time Streaming Protocol (RTSP) is a network control protocol designed for establishing and controlling media sessions between a client and a server. RTSP does not carry the video data itself. Instead, it works alongside RTP (Real-Time Transport Protocol) to control streaming media servers and manage playback commands like play, pause, and stop.
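RTSP control messages are plain text and look a lot like HTTP, which a short sketch makes visible. The helper below only formats RTSP/1.0 requests; the camera URL and session ID are hypothetical, and a real client would also send SETUP with a Transport header before PLAY.

```python
def rtsp_request(method, url, cseq, extra_headers=None):
    """Format an RTSP/1.0 request as text.

    Every request carries a CSeq header so replies can be matched to it.
    """
    lines = [f"{method} {url} RTSP/1.0", f"CSeq: {cseq}"]
    for name, value in (extra_headers or {}).items():
        lines.append(f"{name}: {value}")
    # Headers end with a blank line, as in HTTP.
    return "\r\n".join(lines) + "\r\n\r\n"


CAMERA_URL = "rtsp://camera.example.com/stream1"  # placeholder address
SESSION = {"Session": "12345678"}                 # placeholder session ID

messages = [
    rtsp_request("DESCRIBE", CAMERA_URL, 1),
    rtsp_request("PLAY", CAMERA_URL, 2, SESSION),
    rtsp_request("PAUSE", CAMERA_URL, 3, SESSION),
    rtsp_request("TEARDOWN", CAMERA_URL, 4, SESSION),
]
for message in messages:
    print(message)
```

None of these messages contain video. The media itself flows separately over RTP once the session is playing.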

RTSP’s primary modern use case is IP cameras and video surveillance systems. Most RTSP-supported security cameras output an RTSP feed that can be pulled into a streaming platform for redistribution or cloud recording. Platforms that support RTSP streaming from IP cameras can ingest these feeds and broadcast them to social platforms or embed them on websites.

Despite the rise of newer protocols, RTSP remains useful for communication between input devices and media servers — particularly in surveillance and enterprise video workflows.

WebRTC (Web Real-Time Communication)

WebRTC is an open-source framework — not a single protocol but a collection of protocols (ICE, DTLS, SRTP) — that enables real-time audio and video streaming directly between web browsers. It features built-in encryption for secure communication and delivers sub-second latency through peer-to-peer connections.

WebRTC’s low latency comes from peer-to-peer streaming, making it ideal for real-time applications like video conferencing, video chat, and live chats. It powers Google Meet, Discord, and most browser-based video call applications. For broadcast-scale delivery, WebRTC faces scalability challenges: peer-to-peer doesn’t scale to thousands of viewers without SFU (Selective Forwarding Unit) server architectures.

WHIP (covered above) standardizes WebRTC’s ingest signaling, making WebRTC increasingly relevant for professional broadcasting, not just video calls.

MPEG-DASH (Dynamic Adaptive Streaming over HTTP)

Dynamic Adaptive Streaming over HTTP (MPEG-DASH) is an open international standard (ISO/IEC 23009-1) for adaptive streaming over HTTP. It functions similarly to HLS: it breaks video into segments and uses a Media Presentation Description (MPD) manifest file to enable adaptive bitrate streaming, dynamically adjusting the bitrate based on the viewer’s bandwidth and device capabilities.

The key difference from HLS: DASH is codec-agnostic and not tied to a single vendor. It supports H.264, H.265, VP9, AV1, and others without restriction. DASH is widely supported on Android devices, smart TVs, and set-top boxes. It’s less dominant on iOS (where HLS is native), but both protocols serve the same fundamental purpose — scalable adaptive streaming delivery.

Both HTTP Live Streaming (HLS) and dynamic adaptive streaming over HTTP (MPEG-DASH) are widely recognized protocols that utilize adaptive bitrate streaming to optimize video delivery for different devices and network conditions.

CMAF (Common Media Application Format)

CMAF is a standardized container format — not a protocol, strictly speaking — that allows a single set of video segments to work with both HLS and DASH. Before CMAF, content providers had to encode and store separate segment sets for each protocol. CMAF eliminates that duplication, reducing encoding costs and storage requirements.

CMAF is increasingly adopted by CDNs and OTT platforms that need to deliver video content across devices that expect different protocols. It’s a behind-the-scenes efficiency gain that most viewers never see but that significantly impacts delivery infrastructure costs. Microsoft Smooth Streaming, an earlier adaptive protocol from Microsoft, has largely been superseded by DASH and HLS, with CMAF bridging the gap between them.

Video Streaming Protocol Comparison: RTMP vs. SRT vs. HLS vs. WHIP

This table compares the four primary protocols covered in this guide across the attributes that matter most for broadcasting decisions.

| Attribute | RTMP | SRT | HLS | WHIP |

|---|---|---|---|---|

| Type | Ingest | Ingest (+ some delivery) | Delivery | Ingest |

| Transport | TCP | UDP | HTTP/HTTPS | HTTP (WebRTC) |

| Latency | Low (1–3s) | Very low (sub-second to 2s) | High (15–30s standard) / Medium (2–5s LL-HLS) | Ultra-low (200–500ms) |

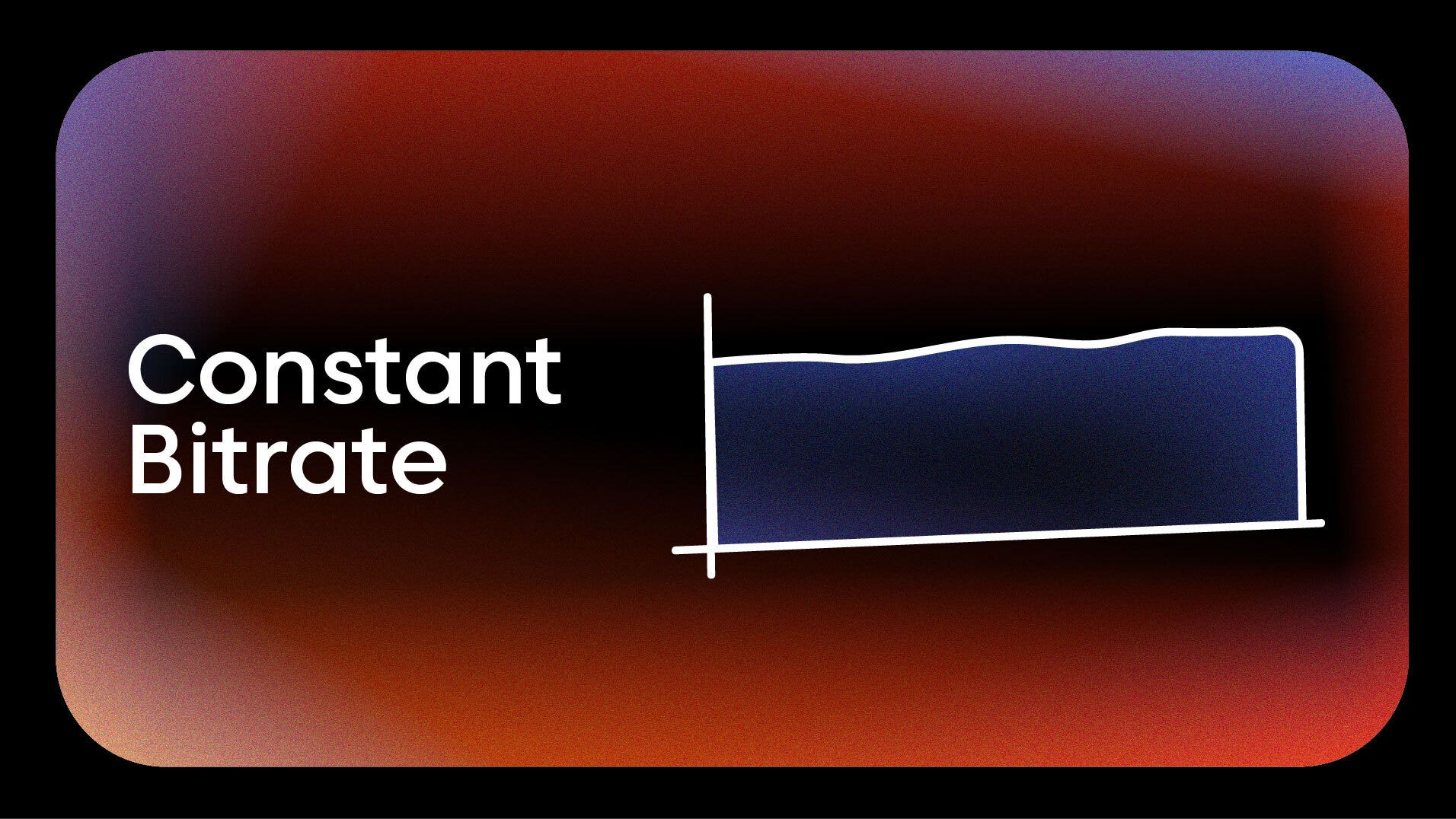

| Adaptive Bitrate | No | No (handled by receiver) | Yes (native) | No |

| Encryption | RTMPS (TLS) | AES-128/256 (native) | HTTPS | DTLS/SRTP (native) |

| Codec Support | H.264, AAC | H.264, H.265 | H.264, H.265, AV1 | VP8, VP9, H.264, AV1 |

| Browser Support | No | No | Yes (universal) | Yes (native) |

| Primary Use Case | Universal ingest to all platforms | Remote production, unreliable networks | Scalable viewer delivery, OTT, VOD | Browser-based ingest, interactive |

| Platform Support | Very high | Growing | Very high | Growing |

| Best For | Multistreaming | Professional contribution | Large-audience delivery | No-software ingest |

The most important takeaway from this table: most professional live streaming workflows use one protocol for ingest and a different protocol for delivery. The most common pipeline looks like this:

RTMP or SRT (ingest) → Cloud transcoding → HLS (delivery)

Your encoder sends a single stream via RTMP or SRT to a streaming platform. The platform transcodes it into multiple quality renditions. Viewers receive it via HLS with adaptive bitrate streaming that adjusts video quality based on each viewer’s internet speed and device capability.

Choose RTMP when universal platform compatibility is the priority — especially for multistreaming. Choose SRT when you’re streaming over unreliable networks or across long distances. Choose HLS for scalable delivery to large audiences with varied network conditions. Choose WHIP for browser-based, ultra-low-latency ingest without encoder software.
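The ingest side of that guidance can be written down as a small decision helper. This is only one way to encode the rules of thumb above; the function name, its parameters, and the order of preference are assumptions made for illustration.

```python
def choose_ingest_protocol(destination_accepts, source_is_browser=False,
                           network_is_unreliable=False):
    """Pick an ingest protocol from the article's rules of thumb.

    destination_accepts: set of protocol names the platform can ingest.
    Preference order: WHIP for browser sources, SRT for shaky networks,
    RTMP as the widely accepted fallback.
    """
    preferences = []
    if source_is_browser:
        preferences.append("WHIP")
    if network_is_unreliable:
        preferences.append("SRT")
    preferences.append("RTMP")

    for protocol in preferences:
        if protocol in destination_accepts:
            return protocol
    raise ValueError("destination accepts none of the candidate protocols")


multi_protocol_platform = {"RTMP", "SRT", "WHIP"}
rtmp_only_platform = {"RTMP"}

print(choose_ingest_protocol(multi_protocol_platform, network_is_unreliable=True))
print(choose_ingest_protocol(rtmp_only_platform, network_is_unreliable=True))
print(choose_ingest_protocol(multi_protocol_platform, source_is_browser=True))
```

The second call shows why RTMP stays relevant: when the destination only speaks RTMP, the better-suited protocol is simply not an option without a relay in between.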

Platforms that support RTMP, SRT, WHIP, and HLS PULL ingest give broadcasters the flexibility to pick the right protocol for each situation rather than being locked into a single option.

How to Choose the Right Streaming Protocol for Your Use Case

Protocol selection depends on what you’re broadcasting, who you’re reaching, and what infrastructure you’re working with. Here’s how the choice maps to specific use cases.

For Multistreaming to Social Platforms

RTMP is the only ingest protocol universally accepted by YouTube, Facebook, Twitch, LinkedIn, Twitter, and custom RTMP destinations. When you need to multistream to 30+ platforms at once, RTMP is the common language every platform speaks. Send a single RTMP feed to a multistreaming service, and it handles redistribution to every destination.

For Church & Worship Services

Churches typically multistream Sunday services to Facebook, YouTube, and their own website. RTMP handles the multi-platform ingest. HLS delivery via an embeddable player on the church website ensures congregants get buffer-free playback regardless of their connection. If the church streams from a remote location with spotty internet, SRT ingest provides more reliable transport than RTMP.

For Corporate Events & Internal Communications

Corporate town halls, training sessions, and investor meetings need security and reliability. SRT provides encrypted, reliable contribution from event venues. HLS handles scalable delivery to employees. For internal corporate streaming where bandwidth is limited, an enterprise CDN (eCDN) uses peer-to-peer delivery to minimize local network consumption. Backup and failover streaming — broadcasting to primary and backup streams with automatic switchover — adds another layer of reliability.

For Sports Broadcasting

Sports broadcasts often originate from venues with unpredictable internet. SRT handles that contribution leg reliably. HLS with adaptive bitrate delivers the video feed to viewers on varied connections — from fiber to mobile data. Geo-blocking for regional rights management is enforced at the delivery layer, restricting access by country to comply with broadcasting agreements.

For OTT Platform Builders

Anyone launching a branded streaming service — a Netflix-style app on iOS, Android, Roku, Apple TV, Fire TV — needs HLS as the primary delivery protocol. HLS is natively supported on every major OTT device. Adaptive streaming protocols are non-negotiable for OTT — viewers expect quality video that adjusts to their connection without manual intervention. A white-label OTT app builder handles the delivery infrastructure so you can focus on content.

For Browser-Based Production

WHIP enables ingest directly from a web browser, with no encoder software required. This is ideal for cloud-based live production where you’re switching cameras, adding overlays, and mixing sources from a browser interface. Remote guests can contribute via WHIP without installing anything. WHIP’s low latency (200–500ms) keeps interactions natural and responsive.

How to Set Up RTMP and SRT Streaming in OBS Studio

OBS Studio is the most widely used free encoder for live streaming. It supports RTMP, SRT, and WHIP ingest natively. Here’s how to configure the two most common ingest protocols.

Setting Up RTMP in OBS

- Open OBS Studio and go to Settings → Stream.

- Set the Service dropdown to Custom.

- Enter your RTMP Server URL (format: rtmp://live.example.com/live).

- Enter your Stream Key in the Stream Key field.

- Click Apply, then click Start Streaming.

That’s it for basic RTMP. Your encoder is now sending video data to your streaming server. For multistreaming to multiple platforms, you’ll need a platform that takes your single RTMP feed and redistributes it — connecting OBS to a multistreaming platform handles this automatically.
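Some tools ask for a single publish URL instead of separate server and key fields. A common convention is to append the stream key to the server URL as the last path segment; the sketch below assumes that convention and adds a few sanity checks. The function name is hypothetical and the key shown is a placeholder.

```python
from urllib.parse import urlparse


def build_rtmp_publish_url(server_url, stream_key):
    """Join an RTMP server URL and stream key into one publish URL.

    Assumes the platform expects server URL + "/" + stream key, which is
    a common convention but should be checked against platform docs.
    """
    parsed = urlparse(server_url)
    if parsed.scheme not in ("rtmp", "rtmps"):
        raise ValueError("server URL must start with rtmp:// or rtmps://")
    if not parsed.netloc:
        raise ValueError("server URL is missing a host")
    if not stream_key or any(ch.isspace() for ch in stream_key):
        raise ValueError("stream key must be non-empty and contain no whitespace")
    return server_url.rstrip("/") + "/" + stream_key


publish_url = build_rtmp_publish_url("rtmp://live.example.com/live", "demo-key")
print(publish_url)
```

Treat the resulting URL like a password, because anyone who has it can publish to your channel.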

Setting Up SRT in OBS

- Open OBS Studio and go to Settings → Stream.

- Set the Service dropdown to Custom.

- In the Server field, enter the SRT URL: srt://[server]:[port]?streamid=[id]&latency=[ms].

- Leave the Stream Key field blank — SRT uses URL parameters instead.

- Under Settings → Output, confirm your encoder is set to x264 or a hardware encoder with H.264 or H.265.

- Click Apply, then click Start Streaming.

SRT setup requires the full URL with parameters. The latency parameter controls the receive buffer: lower values mean less delay but less time to recover lost packets. Start with 200–500ms for local networks and 1000–2000ms for long-distance or unreliable connections. Confirm the unit your encoder expects, because some FFmpeg-based tools read this value in microseconds rather than milliseconds.
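Because the whole SRT configuration lives in one URL, it is easy to mistype. A minimal sketch of a builder for the format shown in the steps above follows; the host, port, and stream ID are placeholders, and the latency value is passed through as given, so use whatever unit your platform documents.

```python
from urllib.parse import urlencode


def build_srt_url(host, port, stream_id, latency):
    """Build an srt:// URL with streamid and latency query parameters.

    latency is passed through unchanged; check which unit the receiving
    tool expects before choosing a value.
    """
    if not 1 <= port <= 65535:
        raise ValueError("port must be between 1 and 65535")
    if latency <= 0:
        raise ValueError("latency must be positive")
    query = urlencode({"streamid": stream_id, "latency": latency})
    return f"srt://{host}:{port}?{query}"


# Placeholder endpoint; 1000 follows the article's long-distance starting range.
srt_url = build_srt_url("ingest.example.com", 9000, "demo-stream", 1000)
print(srt_url)
```

Using `urlencode` also percent-encodes any special characters in the stream ID, which hand-assembled URLs often get wrong.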

Streaming Protocol Security: Encryption and Access Control

Not all video protocols offer the same level of security. The protocol you choose for ingest and delivery determines your baseline encryption — and additional application-layer controls fill the gaps.

| Protocol | Native Encryption | Encryption Type | Secure by Default? |
|---|---|---|---|
| RTMP | No | None (unencrypted) | No |
| RTMPS | Yes | TLS | Yes |
| SRT | Yes | AES-128 / AES-256 | When a passphrase is set |
| HLS | Over HTTPS | TLS (transport) | Yes (over HTTPS) |
| WHIP/WebRTC | Yes | DTLS + SRTP | Yes |
| RTSP | No | None (unencrypted) | No |

SRT provides strong native encryption for ingest once a passphrase is configured: AES-256 is the same standard used by governments and financial institutions. RTMP transmits data unencrypted by default; you need RTMPS for TLS protection. WebRTC (and by extension WHIP) includes DTLS and SRTP encryption natively, making it inherently secure for low-latency communication.

Beyond protocol-level encryption, professional streaming platforms add application-layer security for controlling access: password protection, geo-blocking, and domain referrer restrictions. Password protection limits access to authorized viewers. Domain referrer protection ensures your embeddable player only works on approved websites. Geo-blocking restricts viewing by country, which is critical for sports broadcasting rights management and region-locked video content.
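Domain referrer protection is the easiest of these controls to illustrate. The sketch below shows one plausible way a player backend might compare the HTTP Referer header against an allowlist; the function name and domains are hypothetical, and this is not any specific platform’s implementation.

```python
from urllib.parse import urlparse


def is_referrer_allowed(referer_header, allowed_domains):
    """Return True if the Referer host is an allowed domain or a subdomain of one."""
    if not referer_header:
        return False
    host = (urlparse(referer_header).hostname or "").lower()
    return any(
        host == domain or host.endswith("." + domain)
        for domain in allowed_domains
    )


ALLOWED = {"example.org"}  # placeholder allowlist

print(is_referrer_allowed("https://www.example.org/watch", ALLOWED))
print(is_referrer_allowed("https://example.org.evil.test/", ALLOWED))
```

Matching on the parsed hostname, rather than searching the raw header for a substring, is what stops look-alike domains from passing. Since a Referer header can be omitted or forged by non-browser clients, this check works best alongside the other controls listed above.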

For paid live streaming content behind a paywall, layered security is essential. Combine encrypted ingest (SRT or RTMPS) with encrypted delivery (HLS over HTTPS) and application-layer access controls for the most secure streaming setup.

The Future of Video Streaming Protocols: Trends for 2025–2026

The protocol landscape is shifting. Here are the trends shaping how video will be transported over the next 12 to 24 months.

WHIP adoption is accelerating. IETF standardization gave WHIP legitimacy. OBS support gave it reach. By late 2026, expect most professional live streaming platforms and browser-based production tools to support WHIP ingest natively. Browser-based broadcasting without encoder software is becoming a standard workflow, not an edge case.

Low-Latency HLS is becoming the default delivery mode. Apple’s LL-HLS specification is closing the latency gap between standard HLS and real-time protocols. CDNs are building native LL-HLS support, making sub-5-second delivery scalable for large audiences. The days of 30-second HLS delay are numbered for most use cases.

SRT is replacing RTMP for professional contribution. As SRT Alliance membership grows past 600 organizations and encoder support expands, SRT is becoming the preferred ingest protocol for professional and remote production workflows. RTMP will remain the standard for social platform ingest — YouTube, Facebook, and Twitch still require it — but for the first mile from venue to cloud, SRT is winning.

AV1 codec adoption is influencing protocol choices. The royalty-free AV1 codec offers 30–50% better compression than H.264, according to the Alliance for Open Media. Protocols and platforms that support AV1 — WebRTC/WHIP and newer HLS implementations — will have a meaningful efficiency advantage for delivering video at scale. This matters most for OTT platforms and high-volume broadcasters where bandwidth costs are significant.
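To put that compression figure in bandwidth terms, here is a small worked calculation. It reads "30–50% better compression" as a 30 to 50 percent bitrate reduction at comparable quality, which is an interpretive assumption, and the 6000 kbps starting point is only an example.

```python
def av1_bitrate_range(h264_kbps, min_saving_pct=30, max_saving_pct=50):
    """Return (lowest, highest) expected AV1 bitrate in kbps.

    Savings are whole percentages so the arithmetic stays in integers.
    """
    lowest = h264_kbps * (100 - max_saving_pct) // 100
    highest = h264_kbps * (100 - min_saving_pct) // 100
    return lowest, highest


low, high = av1_bitrate_range(6000)
print(low, high)
```

Under those assumptions, a 6000 kbps H.264 stream would need roughly 3000 to 4200 kbps in AV1, and that difference is multiplied by every concurrent viewer.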

Multi-protocol ingest is becoming standard. Professional platforms increasingly support RTMP, SRT, WHIP, and HLS PULL ingest at the same time. This flexibility lets broadcasters choose the best protocol for their specific network conditions and use case — rather than being forced into a single option. The streaming world is moving toward protocol flexibility, not protocol lock-in.

Conclusion

Video streaming protocols help your video reach viewers fast and safely. Each protocol has a clear role in delivery. They control how video moves from your encoder to your audience.

Platforms like Castr support many key protocols. These include HLS Pull, SRT Pull, RTSP Pull, and RTMP Pull. You can also stream using RTMP Ingest and SRT Ingest. These options give you more control and flexibility. You can send and receive streams without extra hassle.

Castr also offers strong core features for better streaming. It delivers low latency for near real-time playback. It uses adaptive bitrate for smooth viewing on any device. It adds security tools to protect your content from misuse.

Choose a platform that matches your needs and goals. Try Castr today and start streaming with more control and confidence.

Frequently asked questions

What is the difference between RTMP and HLS?

RTMP is an ingest protocol used to send live video from an encoder to a streaming server. HLS is a delivery protocol used to send video from a server to viewers. Most live streaming workflows use RTMP for ingest and HLS for delivery. RTMP operates over TCP with low latency. HLS uses HTTP with adaptive bitrate for scalable, device-compatible playback. They serve different legs of the same broadcast pipeline.

Is RTMP still used?

Yes. RTMP remains the most widely supported ingest protocol for live streaming. Every major platform — YouTube, Facebook, Twitch, LinkedIn — accepts RTMP ingest. All popular encoders including OBS, vMix, and Wirecast support it as a default output. Newer protocols like SRT and WHIP offer technical advantages for specific scenarios, but RTMP’s universal compatibility keeps it essential — especially for multistreaming to multiple platforms.

What is the best protocol for low-latency live streaming?

For ingest, WHIP (via WebRTC) offers the lowest latency at 200–500 milliseconds. SRT follows at sub-second to 2 seconds. For delivery, Low-Latency HLS achieves 2–5 seconds. Standard HLS has 15–30 seconds of latency. Low-latency streaming is suited for interactive scenarios such as video calls, live betting, and real-time Q&A. The best choice depends on whether you need low latency on the ingest side, delivery side, or both.

Can I use SRT for live streaming to YouTube or Facebook?

YouTube and Facebook do not natively accept SRT ingest as of 2026. They primarily accept RTMP. However, you can use SRT to send your stream to a multistreaming platform that accepts SRT ingest and then redistributes it via RTMP to YouTube, Facebook, and other social destinations. This gives you SRT’s reliability on the first mile while maintaining compatibility with every platform.

What protocol does OBS Studio use for streaming?

OBS Studio supports RTMP (default), SRT, and WHIP for live stream ingest. RTMP is the most commonly used because it’s accepted by all major streaming platforms. SRT is available for users who need better performance over unreliable networks. WHIP support was added in OBS version 30 for ultra-low-latency WebRTC ingest.

What is the difference between SRT and RTMP?

SRT uses UDP with ARQ error correction. It offers better performance on unreliable networks, native AES encryption, H.265 codec support, and adjustable latency. RTMP uses TCP. It offers universal platform compatibility but no native encryption (requires RTMPS), is limited to H.264, and is less resilient on congested networks. SRT is technically superior for contribution. RTMP is more widely supported by social streaming platforms.

Do I need different protocols for ingest and delivery?

Yes, in most professional setups. Video streaming protocols are categorized by their use for ingest (sending video to a server) or delivery (sending video to viewers). Ingest protocols like RTMP, SRT, and WHIP are optimized for sending video from your encoder to a server. Delivery protocols like HLS and DASH are optimized for distributing video from a server to thousands or millions of viewers with adaptive quality. A streaming platform handles the conversion between ingest and delivery protocols automatically.

What streaming protocol should I use for multistreaming?

RTMP is the recommended protocol for multistreaming to multiple social platforms. It is the only ingest protocol universally accepted by YouTube, Facebook, Twitch, LinkedIn, Twitter, and custom RTMP destinations. You send a single RTMP stream to a multistreaming service, which redistributes it to all your chosen platforms without requiring separate streams for each.